Understanding your live website test results

Last updated: April 19, 2026

As testers complete your live maze, you'll start seeing insights in the Results dashboard.

The results for your live website testing blocks are displayed in three sections: Usability metrics, Mission paths, and Responses.

Before you start

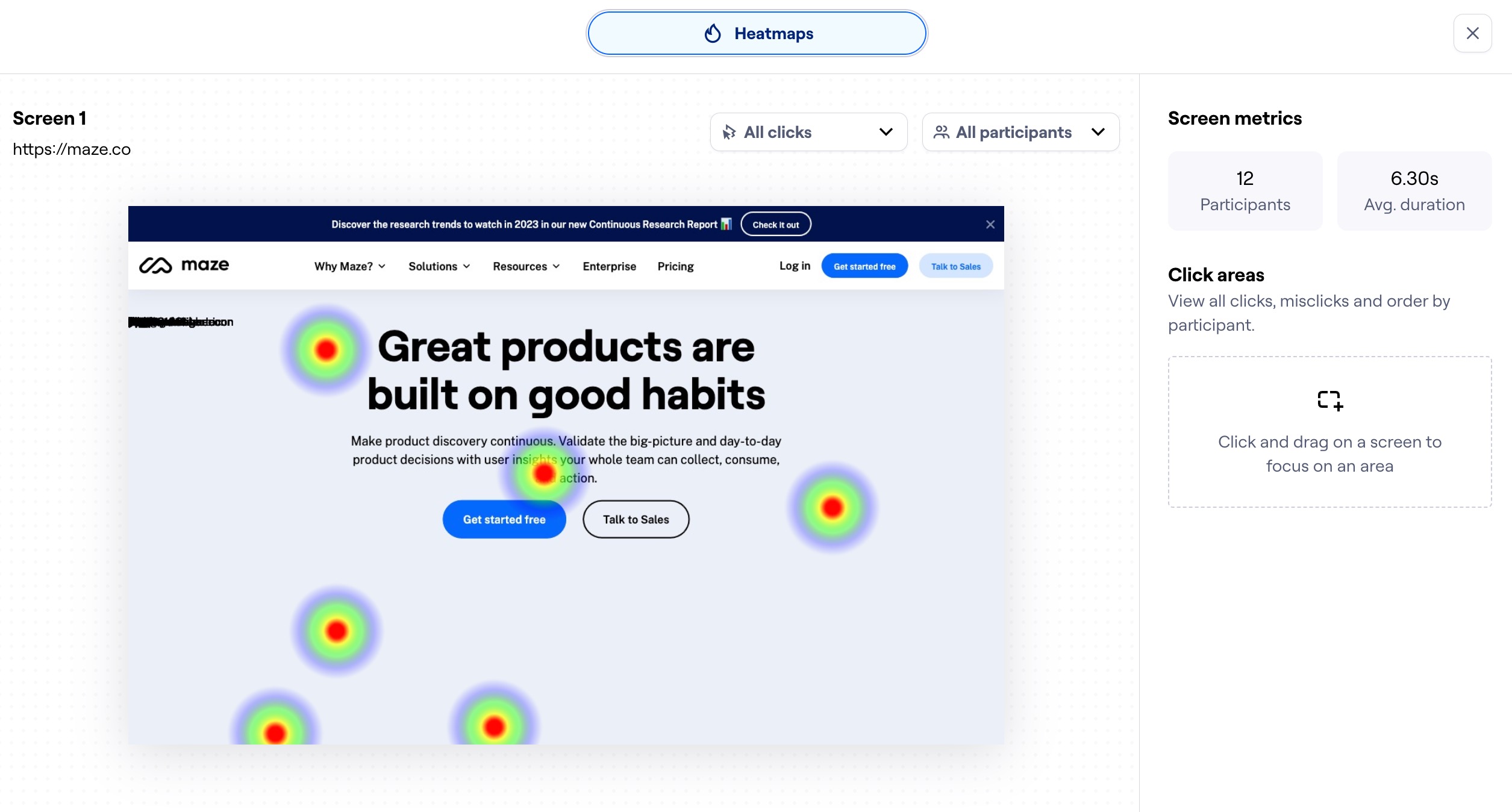

- Clicks on a website test are captured as heatmaps. Non-click interactions (e.g. hover, drag) aren't shown on the heatmap.

- We take only one snapshot of each page, when the tester enters it for the first time. For this reason, overlays and modals aren't captured in the heatmap.

Usability metrics

In this section, you'll see a summary containing the following metrics:

- Mission completed: Percentage of testers who completed the mission

- Expected paths followed: Percentage of testers who followed an expected path

- Mission unfinished: Percentage of testers who abandoned the mission

- Average duration: Average time testers took to complete the mission
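
To make the definitions above concrete, here is a minimal sketch of how these four metrics could be derived from a list of tester sessions. It is not Maze's implementation: the `Session` record, the outcome labels, and the choice to average duration over completed missions only are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical record; the field names are not taken from Maze.
    outcome: str        # "direct", "indirect", or "unfinished"
    duration_s: float   # time spent on the mission, in seconds


def usability_metrics(sessions):
    """Summarize sessions into the four metrics listed above."""
    total = len(sessions)
    if total == 0:
        return {"completed_pct": 0.0, "expected_path_pct": 0.0,
                "unfinished_pct": 0.0, "avg_duration_s": 0.0}

    completed = [s for s in sessions if s.outcome in ("direct", "indirect")]
    direct = [s for s in sessions if s.outcome == "direct"]

    # Assumption: average duration only counts testers who completed the mission.
    avg = sum(s.duration_s for s in completed) / len(completed) if completed else 0.0

    return {
        "completed_pct": 100 * len(completed) / total,
        "expected_path_pct": 100 * len(direct) / total,
        "unfinished_pct": 100 * (total - len(completed)) / total,
        "avg_duration_s": avg,
    }


sample = [
    Session("direct", 30),
    Session("direct", 50),
    Session("indirect", 70),
    Session("unfinished", 20),
]
metrics = usability_metrics(sample)
print(metrics)
```

With this sample, three of four testers completed the mission and two of four followed an expected path, so the completed and expected-path percentages differ by the share of indirect successes.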

Mission paths

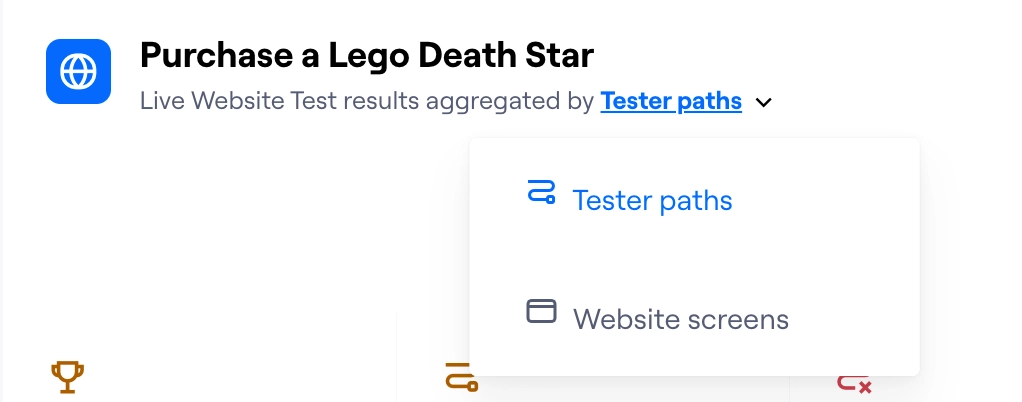

Results data is aggregated by path or by screen. To switch between the two views, click the Live website test results aggregated by dropdown.

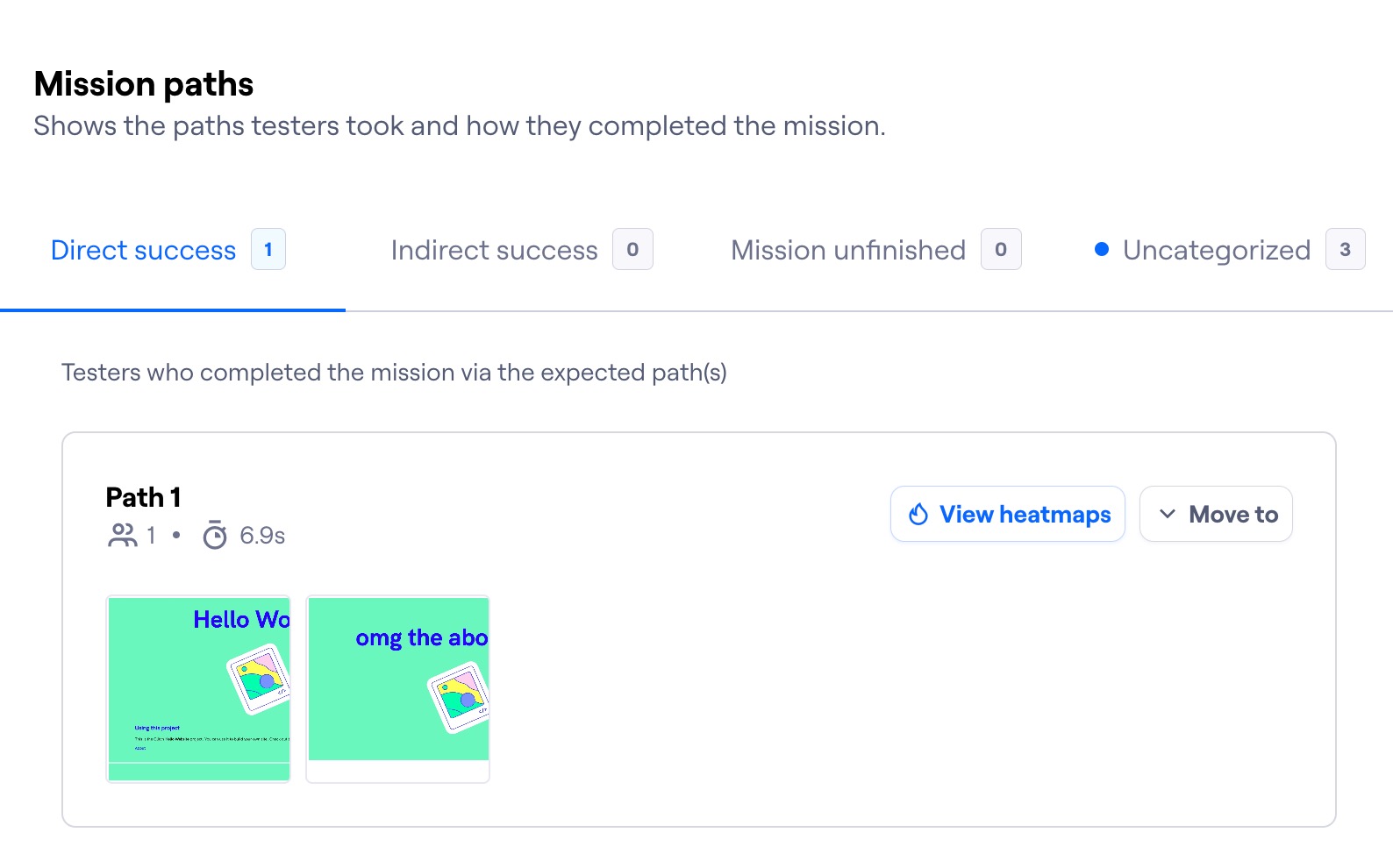

Mission path analysis

When you open your Results dashboard, you'll see the aggregated paths view by default. This view groups the paths taken by testers based on the mission outcome:

- Direct success: Testers who completed the mission via the expected path(s).

- Indirect success: Testers who completed the mission via unexpected path(s), but still reached the final page.

- Mission unfinished: Testers who abandoned the mission, or finished it on the wrong screen.

- Uncategorized: You may sometimes see an Uncategorized tab. It groups all tester paths that haven't been automatically assigned to a success category. Learn how to manually categorize paths

Under each tab, you'll see the paths corresponding to each outcome, including the number of testers who met that outcome.
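
The grouping described above can be sketched as follows. This is an illustration only: the function names are hypothetical, and treating a path as "expected" only when it matches an expected path exactly is an assumption, since this article doesn't describe how Maze matches paths internally.

```python
from collections import Counter, defaultdict


def classify_path(path, expected_paths, goal):
    """Return "direct", "indirect", or "unfinished" for one tester path."""
    # Abandoned, or ended on a screen other than the final page.
    if not path or path[-1] != goal:
        return "unfinished"
    # Assumption: a direct success is an exact match with an expected path.
    if tuple(path) in {tuple(p) for p in expected_paths}:
        return "direct"
    return "indirect"


def group_paths(tester_paths, expected_paths, goal):
    """Group identical paths under their outcome and count testers per path."""
    groups = defaultdict(Counter)
    for path in tester_paths:
        outcome = classify_path(path, expected_paths, goal)
        groups[outcome][tuple(path)] += 1
    return groups


expected = [["/", "/pricing", "/checkout"]]
observed = [
    ["/", "/pricing", "/checkout"],
    ["/", "/pricing", "/checkout"],
    ["/", "/blog", "/pricing", "/checkout"],
    ["/", "/blog"],
]
groups = group_paths(observed, expected, goal="/checkout")
for outcome, paths in groups.items():
    for path, testers in paths.items():
        print(outcome, " > ".join(path), testers)
```

Each outcome maps to a counter of distinct paths, which mirrors the tabs in the dashboard: one list of paths per outcome, with the number of testers who took each.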

Hover over each path and click View heatmaps to see the combined heatmaps from the testers who took that path. Learn more about heatmaps in Maze

If you've enabled Clips, you'll also be able to see the recordings here.

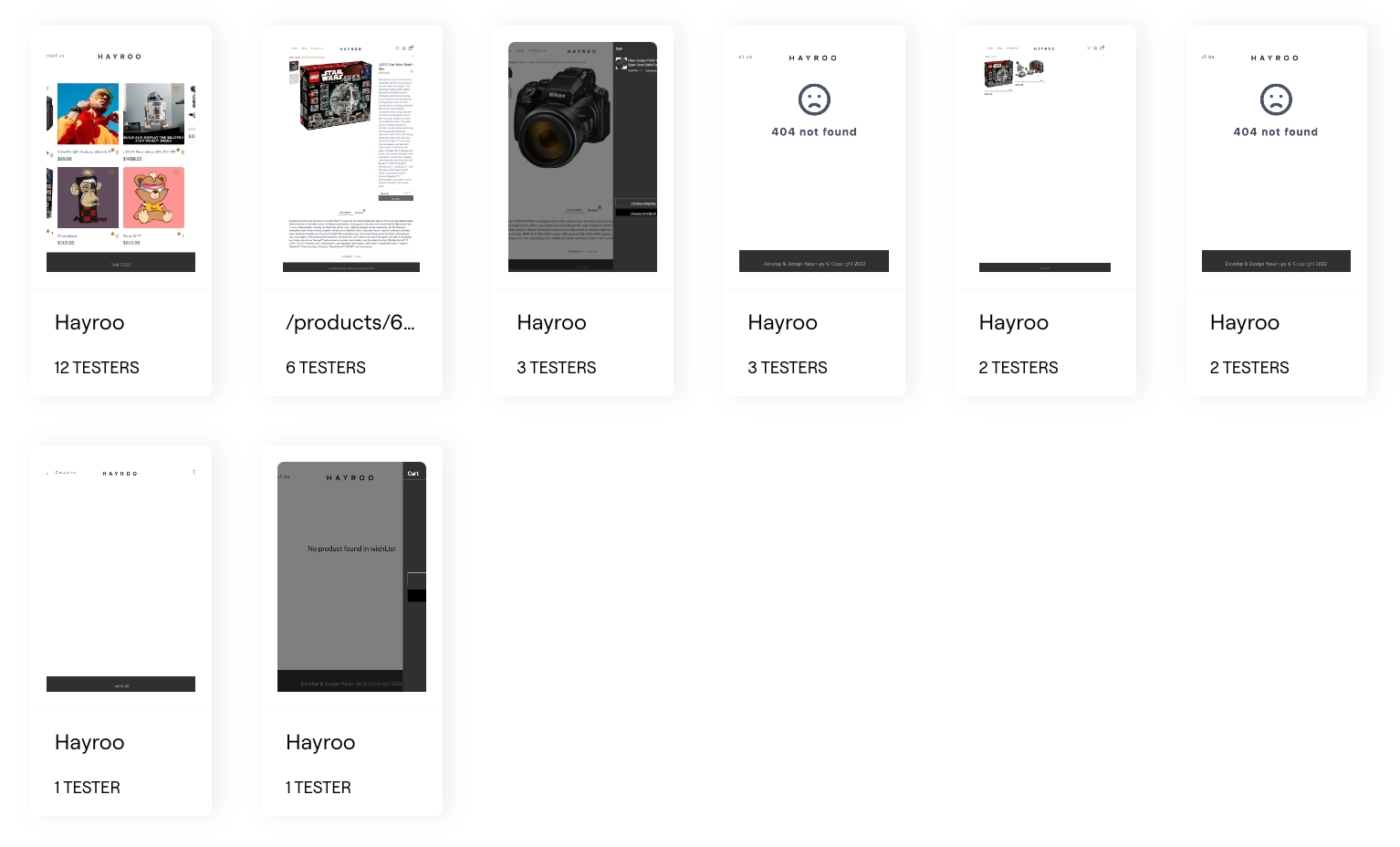

Screen analysis

Results data can also be grouped by the website screens your testers saw during the test.

Click each screen to see the heatmap of your testers' interactions for that screen.

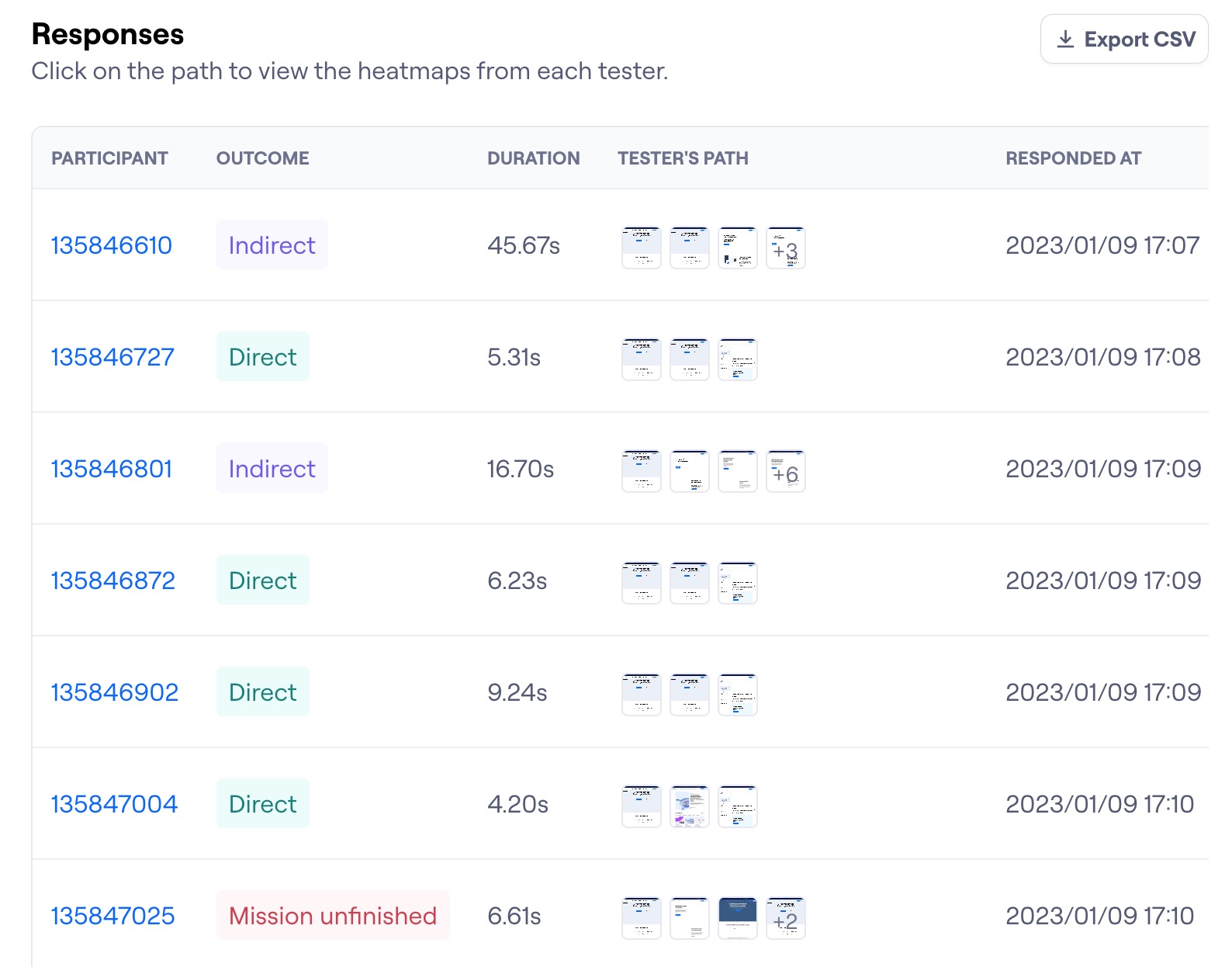

Responses

Under Responses, you can see information about each testing session:

- Clips: Whether screen, audio, or video recordings were saved for the mission

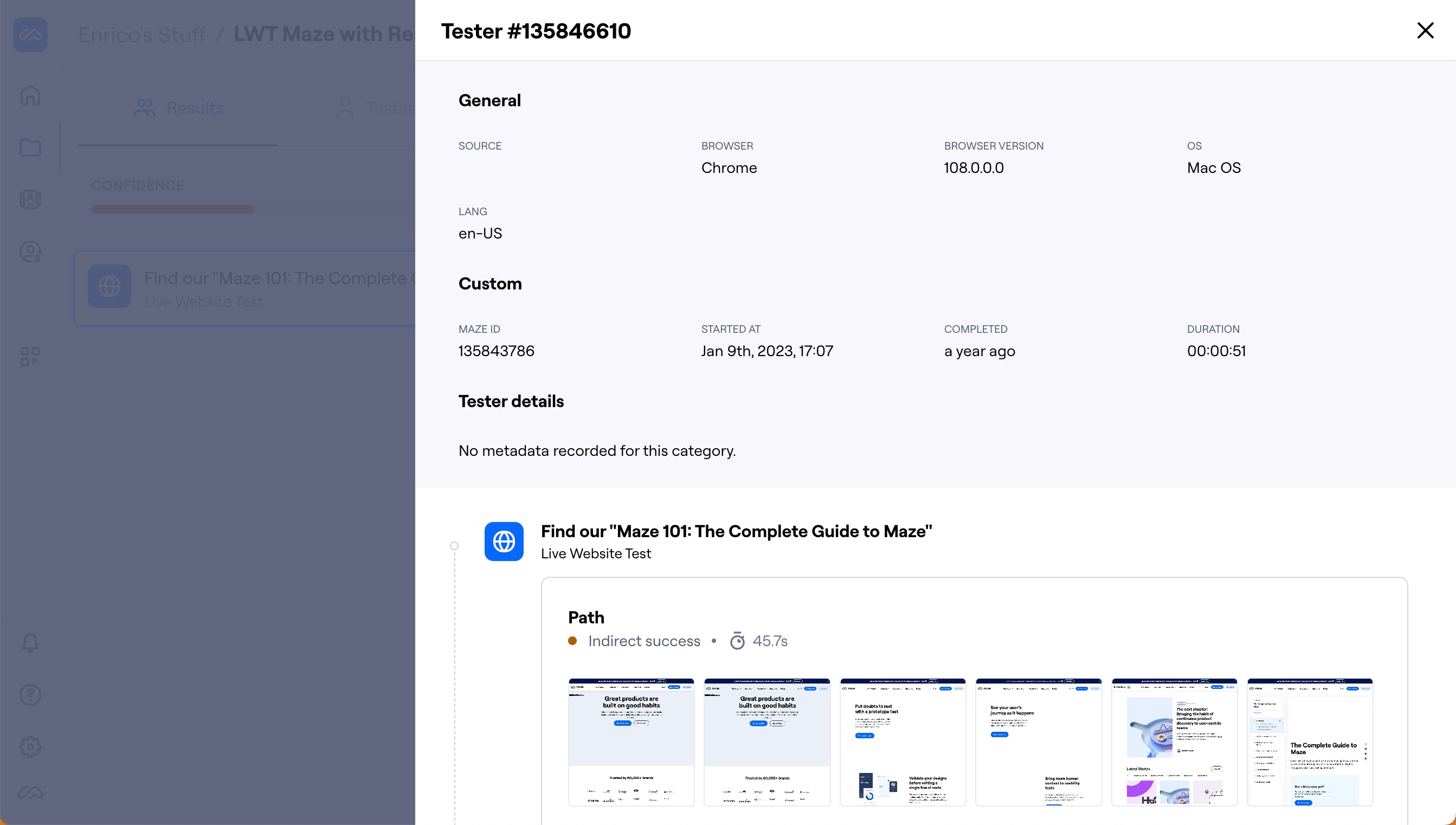

- Participant: The individual tester's ID

- Outcome: Whether the mission resulted in a direct or indirect success, or the tester gave up

- Duration: How long it took the tester to complete the mission

- Tester path: A thumbnail preview of the first four screens of the path the tester took

- Responded at: The date and time of the test

Click each row to see the individual tester's responses to the maze, as well as the specific path they took on the mission.

How many testers do I need?

The number of testers you need depends on the type of research you're running. We recommend testing with at least 20 testers for unmoderated studies and 5–10 for moderated studies.

Learn more about the ideal number of testers

Filter your results

When reviewing your results, refining the data can help you answer specific questions.

Filters let you narrow down results data by participant metadata or by block answer or outcome.

Learn more about results filtering

Reports

Maze reports make it easy to analyze, share, and present your results data. A report is generated automatically for every live maze that has been tested by at least one tester.

Export your results

To export your results data, open the More menu (•••) and click Export as CSV file or Export as image.
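
Once you have a CSV export, you can analyze it outside Maze. The sketch below shows one way to do that with Python's standard library. The column names (`Participant`, `Outcome`, `Duration (s)`) and the sample rows are hypothetical placeholders, not the actual export format, so adjust them to match the headers in your own file.

```python
import csv
import io
from statistics import median

# Hypothetical stand-in for an exported file; real column names may differ.
SAMPLE_CSV = """Participant,Outcome,Duration (s)
p1,direct success,30
p2,indirect success,70
p3,mission unfinished,20
p4,direct success,50
"""


def load_rows(text):
    """Parse CSV text into a list of dicts keyed by column header."""
    return list(csv.DictReader(io.StringIO(text)))


def median_duration(rows, outcome):
    """Median duration in seconds for one outcome, or None if no rows match."""
    durations = [float(r["Duration (s)"]) for r in rows if r["Outcome"] == outcome]
    return median(durations) if durations else None


rows = load_rows(SAMPLE_CSV)
direct_median = median_duration(rows, "direct success")
print(direct_median)
```

To read a real export, replace `io.StringIO(text)` with a file opened via `open(path, newline="")`, as the `csv` module documentation recommends.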